[Fig. 1a]

[Fig. 1b]

I. Background

This series is about how to deal with the dearth of in situ measurements of the whole ocean, top to bottom (Oceans: Abstract Values vs. Measured Values, 2, 3).

The issue, including what to do about it, has been addressed in the scientific literature:

"Prior to 2004, observations of the upper ocean were predominantly confined to the Northern Hemisphere and concentrated along major shipping routes; the Southern Hemisphere is particularly poorly observed. In this century, the advent of the Argo array of autonomous profiling floats ... has significantly increased ocean sampling to achieve near-global coverage for the first time over the upper 1800 m since about 2005. The lack of historical data coverage requires a gap-filling (or mapping) strategy to infill the data gaps in order to estimate the global integral of OHC." (Ocean Science 2016, Cheng et alia, emphasis added; PDF here).

Going back a bit further, the issue came up in another paper:

"A compilation of paleoceanographic data and a coupled atmosphere-ocean climate model were used to examine global ocean surface temperatures of the Last Interglacial (LIG) period, and to produce the first quantitative estimate of the role that ocean thermal expansion likely played in driving sea level rise above present day during the LIG. Our analysis of the paleoclimatic data suggests a peak LIG global sea surface temperature (SST) warming of 0.7 ± 0.6°C compared to the late Holocene. Our LIG climate model simulation suggests a slight cooling of global average SST relative to preindustrial conditions (ΔSST = −0.4°C), with a reduction in atmospheric water vapor in the Southern Hemisphere driven by a northward shift of the Intertropical Convergence Zone, and substantially reduced seasonality in the Southern Hemisphere. Taken together, the model and paleoceanographic data imply a minimal contribution of ocean thermal expansion to LIG sea level rise above present day. Uncertainty remains, but it seems unlikely that thermosteric sea level rise exceeded 0.4 ± 0.3 m during the LIG. This constraint, along with estimates of the sea level contributions from the Greenland Ice Sheet, glaciers and ice caps, implies that 4.1 to 5.8 m of sea level rise during the Last Interglacial period was derived from the Antarctic Ice Sheet. These results reemphasize the concern that both the Antarctic and Greenland Ice Sheets may be more sensitive to temperature than widely thought." (The role of ocean thermal expansion, AGU, emphasis added).

Basically, the scientists point out that this exercise is not a picnic:

"The oceans present myriad challenges for adequate monitoring. To take the ocean’s temperature, it is necessary to use enough sensors at enough locations and at sufficient depths to track changes throughout the entire ocean. It is essential to have measurements that go back many years and that will continue into the future.

...

Since 2006, the Argo program of autonomous profiling floats has provided near-global coverage of the upper 2,000 meters of the ocean over all seasons [Riser et al., 2016]. In addition, climate scientists have been able to quantify the ocean temperature changes back to 1960 on the basis of the much sparser historical instrument record [Cheng et al., 2017]." (The Most Powerful Evidence, Inside Climate News, emphasis added).

That quote contains a reference to "Cheng et al. 2017", which contains the following statement:

"In this paper, we extend and improve a recently proposed mapping strategy (CZ16) to provide a complete gridded temperature field for 0- to 2000-m depths from 1960 to 2015.

...

The success of a mapping method can be judged by how accurately it reconstructs the full ocean temperature domain. When the global ocean is divided into a monthly 1°-by-1° grid, the monthly data coverage is [less than]10% before 1960, [less than]20% from 1960 to 2003, and [less than]30% from 2004 to 2015 (see Materials and Methods for data information and Fig. 1)." (Improved estimates of ocean heat content from 1960 to 2015, Cheng et al, 2017).

In other words, it has not yet been accomplished.

That is where the Dredd Blog criticism of "thermal expansion is the major cause of sea level rise in the past century or so" comes from (On Thermal Expansion & Thermal Contraction, 2, 3, 4, 5, 6, 7, 8, 9, 10, 11, 12, 13, 14, 15, 16, 17, 18, 19, 20, 21, 22, 23, 24, 25, 26, 27).

II. An Abstract Observation

Now, let's consider that "gap-filling (or mapping) strategy" exercise (named in the first quote above and extended in the last one, Cheng et al, 2017).

The problem has been approached here at Dredd Blog by developing a software program I call an abstract pattern generator, which produces WOD patterns using data in the WOD documentation.

[Fig. 2a]

[Fig. 2b]

The upper pane of Fig. 1a is a graph generated from data of the Permanent Service for Mean Sea Level (PSMSL).

It details sea level rise (SLR) at the "Golden 23" tide gauge stations (185.157 mm of SLR).

The lower pane is a graph of the abstract pattern of thermal expansion over the same time frame (25.019 mm of SLR).

In other words, the abstract thermal expansion pattern shows that thermal expansion is only 13.5% of total SLR (25.019 ÷ 185.157 = 0.135123166 or 13.5%) during that time frame, which means that it is not a major portion of global sea level rise. Those numbers also mean that the remaining 86.5% of SLR is caused by ice sheet and land glacier melt water.
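The arithmetic behind those percentages can be checked with a few lines of Python. This is only a sketch that uses the two totals quoted above; the variable names are mine. Attributing the remainder to melt water is the reading given in this post, not something the code demonstrates.

```python
# Totals quoted in the text (mm of sea level rise over the same time frame).
total_slr_mm = 185.157    # PSMSL "Golden 23" tide gauge stations
thermal_slr_mm = 25.019   # abstract thermal expansion pattern

# Share of the total explained by thermal expansion, and what is left over.
thermal_share = thermal_slr_mm / total_slr_mm
remainder_share = 1.0 - thermal_share

print(f"thermal expansion share: {thermal_share:.1%}")
print(f"remainder: {remainder_share:.1%}")
```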

The abstraction calculation is based on World Ocean Database (WOD) data in their official documentation, depicted in part at Fig. 2a and Fig. 2b, which is a portion of "APPENDIX 11. ACCEPTABLE RANGES OF OBSERVED VARIABLES AS A FUNCTION OF DEPTH, BY BASIN" (see Appendix 11, page 132, of The WOD Manual, PDF).

The gist of Appendix 11 is to show maximum and minimum values at all ocean depths in all ocean basins around the globe.

The software adds the maximum and minimum values together, then divides by 2 (at each depth of each ocean basin), giving a midpoint value. It is then ready for the next step, which is to conform those values to GISTEMP constraints.

By "GISTEMP constraints" I mean adjusting those averaged Appendix 11 values by the GISTEMP anomaly pattern.

That is done by multiplying the WOD values by 0.93 (93% of that GISTEMP anomaly value becomes the temperature anomaly value in each ocean basin at each depth).

That is because scientists tell us that some 93% of the heat trapped by greenhouse gases ends up in the oceans.

So, by fusing that GISTEMP anomaly pattern to the abstract temperature pattern made by the WOD data, we have a pattern which we can use to generate Thermodynamic Equation Of Seawater (TEOS) patterns (e.g. Golden 23 Zones Meet TEOS-10).
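The steps in this section can be sketched in a few lines of Python. This is one reading of the description, not the actual pattern generator: the function names, the sample ranges, and the sample anomalies are all hypothetical. Adding 93% of the anomaly to the midpoint, rather than scaling the midpoint itself, is an assumption.

```python
OCEAN_HEAT_FRACTION = 0.93  # share of trapped heat said to end up in the oceans


def midpoint(min_value, max_value):
    """Midpoint of an Appendix 11 style acceptable range: (max + min) / 2."""
    return (max_value + min_value) / 2.0


def abstract_temperature(min_t, max_t, gistemp_anomaly):
    """Range midpoint shifted by 93% of a GISTEMP anomaly (assumed reading)."""
    return midpoint(min_t, max_t) + OCEAN_HEAT_FRACTION * gistemp_anomaly


# Hypothetical ranges (deg C) keyed by depth in metres; NOT WOD manual values.
sample_ranges = {0: (-2.0, 30.0), 1000: (0.0, 10.0)}
# Hypothetical annual anomalies (deg C); NOT actual GISTEMP values.
sample_anomalies = {1880: -0.2, 2016: 1.0}

# One abstract temperature per (year, depth) pair for a single basin.
pattern = {
    (year, depth): abstract_temperature(lo, hi, anomaly)
    for year, anomaly in sample_anomalies.items()
    for depth, (lo, hi) in sample_ranges.items()
}
```

A real run would loop over every basin and depth in Appendix 11 and over every year of the anomaly record. The same dictionary shape would still work, with the basin added to the key.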

III. Using Abstract Patterns With TEOS-10

Using abstract WOD data to generate TEOS values is done by the same process as using in situ ocean temperature and salinity measurements (The Art of Making Thermal Expansion Graphs).

To convert either in situ temperature and salinity measurements or abstract temperature and salinity values into TEOS-10 quantities, one uses the TEOS functions (the difference is that the abstract values have been conformed to the GISTEMP pattern as stated in Section II above).

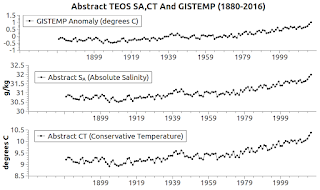

The graph at Fig. 1b shows the resulting TEOS Conservative Temperature (CT) and Absolute Salinity (SA) patterns that emerge on an annual basis from 1880 - 2016 when one uses this technique.

From that, we can then generate the thermal expansion coefficient and the thermosteric volume change.

From that thermosteric volume change we can calculate the sea level change (SLC) as shown in Fig. 1a.
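As a rough illustration of that last step, here is a minimal Python sketch, assuming a water column of fixed surface area, so that volume change per unit area equals height change. It sums the thermal expansion coefficient times the Conservative Temperature change times the layer thickness. The layer values are hypothetical. In a real workflow the coefficient would come from the TEOS-10 functions (for example `gsw.alpha(SA, CT, p)`, if the GSW Python package is the toolkit in use).

```python
def thermosteric_height_change_mm(layers):
    """Sum alpha * delta_CT * thickness over layers; returns millimetres.

    Each layer is a tuple (thickness_m, alpha_per_K, delta_ct_K).
    """
    change_m = sum(thickness * alpha * d_ct for thickness, alpha, d_ct in layers)
    return change_m * 1000.0


# Hypothetical layers: thickness (m), thermal expansion coefficient (1/K),
# and Conservative Temperature change (K). Illustrative values only.
sample_layers = [
    (500.0, 2.0e-4, 0.10),
    (1500.0, 1.5e-4, 0.02),
]

slc_mm = thermosteric_height_change_mm(sample_layers)
print(f"thermosteric sea level change: {slc_mm:.1f} mm")
```

A negative temperature change in a layer gives a negative contribution, which is how thermal contraction would show up in the same sum.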

The WOD manual data for all 30 ocean basins around the globe, at all 33 depths in each of those basins, form a pattern against which we can judge the general completeness and general accuracy of our in situ measurements.

It also helps us to select a "Golden 23" group of areas that mirror the whole ocean (On Thermal Expansion & Thermal Contraction - 28).

IV. Comparing In Situ Measurement Patterns With Abstract Calculated Patterns

So now we can talk about the current technique of using what are described as skimpy in situ measurements (down to only about 2,000 m depth, when the average ocean basin depth is 3,682.2 m, so roughly 46% of that depth is not used) to make the estimations that all of the science team authors wrote about.

They pointed out that we have to use estimations in any case, because the datasets are incomplete in various places for various reasons, from dangerous conditions to weaker technology in times past.

To me, incomplete data is a bad place to start. I have come to realize that the in situ measurements, although quite accurate and plentiful, are a patchwork of convenience-based expeditions that can make it difficult to see the entire picture.

I mean the total picture, which must be constructed from beyond the convenience zone of expeditions limited to safe and warm ocean areas.

That is why I hypothesize that it is better to start with an abstract pattern which matches the pattern made by our historically complete datasets (e.g. GISTEMP & PSMSL).

V. Conclusion

"He say one and one and one is three ... come together ..."

The next post in this series is here, the previous post in this series is here.

The use of the description "king tides" is a form of denial (‘King tides’ are rising).

The discussion "how high" and "how soon" is more germane for a realistic comprehension of SLC.